Yin and Yang of the AI Apocalypse

Will We Still Be Here by the Time AI Helps Us Find Our Audience?

Even though I use a lot of technical tools, many of which rely on AI for part of their functionality, I have been slow/loath to use ChatGPT to write newsletters, social posts, press kits or anything else, nor have I embraced Midjourney to create key art or other graphic design. I tried ChatGPT once but was not impressed with the results – though I realize I probably haven’t taken the time to learn how to prompt it properly. The masochist in me also seems to prefer agonizing over posts like this to letting a bot spew out sludge. But my aversion primarily stems from my love/hate relationship with technology in general and AI in particular.

Last week OpenAI previewed Sora, which creates short videos from text prompts. Some of it is quite astonishing, and it is remarkable to see how fast AI is developing. If this is how rapidly it is developing in the creative landscape, I can’t even fathom how it is advancing in other spheres (such as warfare). In related news, this Saturday the D-Word is co-presenting with Phillip Shane an online workshop, ChatGPT for Documentary Filmmakers, which I intend to attend, even though that might surprise you after you read the rest of this newsletter.

Ultimately, generative AI, like all technology, is a tool that we as a society must figure out how to use and regulate properly without letting it destroy our humanity or the planet that sustains us. So far, the global north at least has not been great at controlling technology’s effects. Last March, a letter/petition containing policy recommendations from a notable group of AI scientists (one of whom wrote the primary AI textbook used in universities) and Elon Musk posed the following questions: “We must ask ourselves: Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization?” To my knowledge, none of the policy recommendations have been put into effect, and I don’t have much faith in Congress to do so. What is a bit sad is that only 33,000 people have signed this petition so far. Perhaps people are tired of signing online petitions (that never seem to result in action), perhaps people are concerned about being targeted by future robot overlords (it gave me pause), or perhaps people just don’t care and just want their text-prompt videos now (or want AI to write their newsletters, which Mailchimp keeps prompting me to do)!

Other than the important writers’ and actors’ strikes, very little has been done to control AI (go striking workers!). Concerns for independent artists are many, including appropriation (see yesterday’s post from Ted Gioia on how people, or perhaps sentient bots, are using AI to appropriate his work) and algorithms blocking the promotion of independent films, as Anthony Kaufman points out in this great piece.

OpenAI is not releasing Sora to the public because it recognizes these potential dangers, and is instead using a “red team” to help figure out all the nefarious ways Sora could be used and how to control it. But I have never seen evidence of an industry regulating itself for the good of the public, and OpenAI’s recent leadership struggle kicked those concerned about the abuse of AI out of the company. So I don’t have a lot of faith in that “red team”.

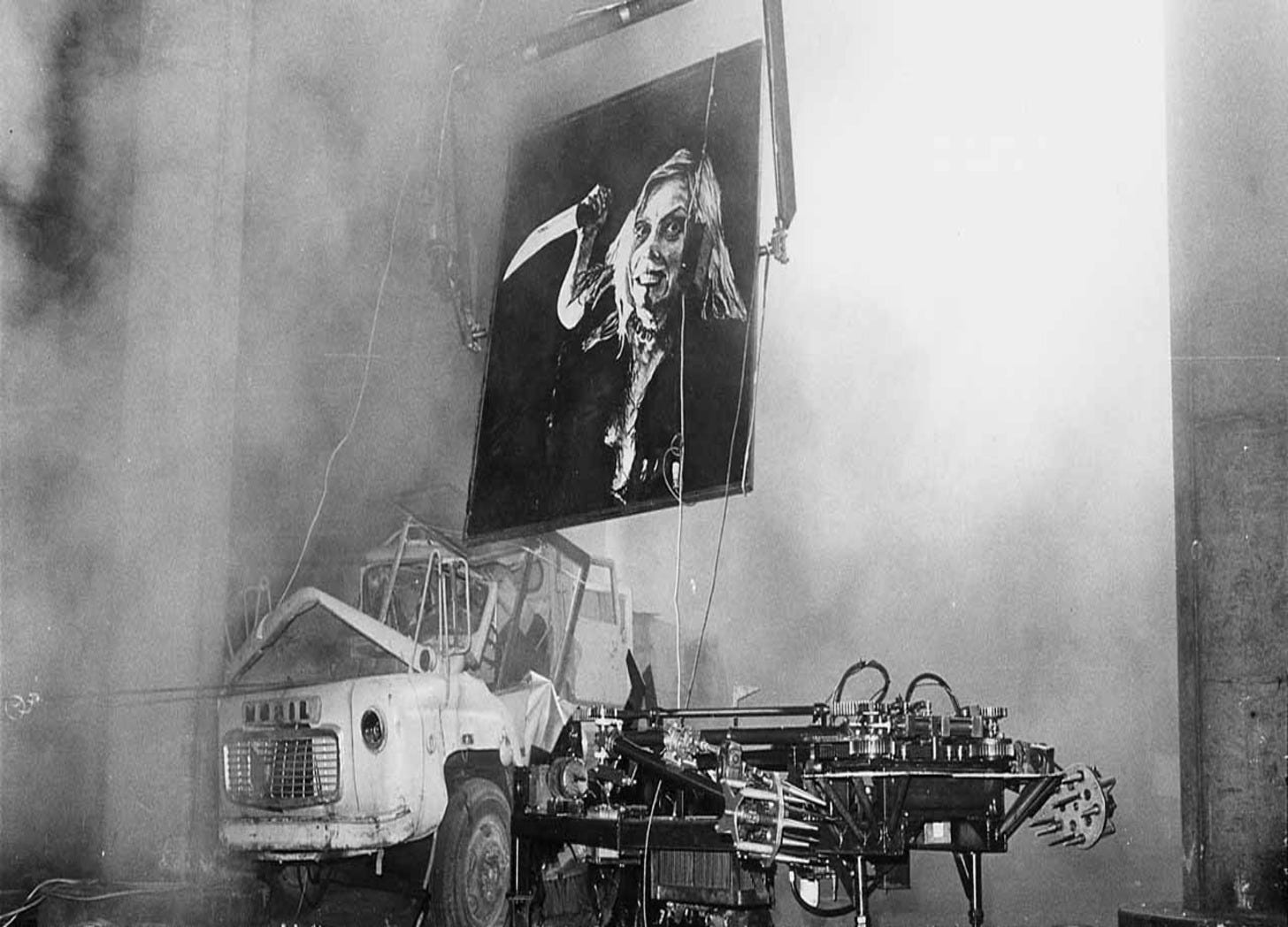

I highly recommend reading or taking a look at Human Compatible: Artificial Intelligence and the Problem of Control by Stuart Russell (the author of the primary AI textbook I mentioned above and one of the primary authors of the letter). I had the opportunity to interview Stuart for my documentary about Mark Pauline/Survival Research Laboratories, whose work has questioned our relationship with technology since 1978. This questioning is one of the main reasons that I have devoted much of my creative career to working with Mark and SRL, and it spurred me to make a new documentary about him. This quote from my interview with Stuart is telling: “The King Midas problem is an example of how it is that we lose control of our own creations. Midas asked the gods that everything he touched should turn to gold, and he got exactly what he asked for – but of course his food and his family all turned to gold, and he dies of misery and starvation. That’s how we lose control of super intelligent machines. They pursue the objectives we give them and just like the gods, they pursue them too well. And we don’t know how to turn them off. We don’t know how to stop them. We have to figure out how we retain control over something that’s more intelligent and more powerful than us – forever.” When I look out at humanity, I have strong doubts about whether we can accomplish this – forever is a long time. (Perhaps this is where I should have asked Midjourney to create an image of generative AI as King Midas, but instead I used a photo from an SRL performance above.)

This all gives me pause when I consider using these technologies. Using ChatGPT helps to train it. Hence, by using it, I am complicit in what evolves from it. I’m a proud Luddite. Luddites were not anti-machine; they were radical workers protesting how machines were being used in “a fraudulent and deceitful manner” to get around standard labor practices. Sound familiar? But even though I’m a Luddite, I try not to keep my head in the sand – so perhaps I’ll see you Saturday.

My Yang hopes that one day AI will be regulated and will help solve all our societal and planetary ills – and even help us find our audiences and create sustainable careers. My cynical Yin thinks my Yang is out of its mind.